Scaling LLMs Is a Different Ball Game: What I Learned About LLMOps

Himanshu Manghnani

7/31/2025 · 4 min read

Over the past few weeks, I’ve been diving deeper into how generative AI products, especially those built on large language models (LLMs) like GPT, move from a simple proof of concept to something truly scalable and production-ready.

If you think about it, it’s easy to build a GPT-powered agent today: just plug in an API, add a prompt, and you have something working in minutes. But what caught my attention is what comes after the demo.

As I explored more, I came across a term that’s rapidly gaining importance: LLMOps.

Just like MLOps became essential for scaling traditional machine learning pipelines, LLMOps is emerging as the new backbone for running, monitoring, and maintaining LLM-based systems. And while they sound similar, the challenges LLMOps handles are quite different.

I wanted to share what I’ve learned so far; maybe it’ll help you think about your own GenAI project beyond the excitement of the prototype stage.

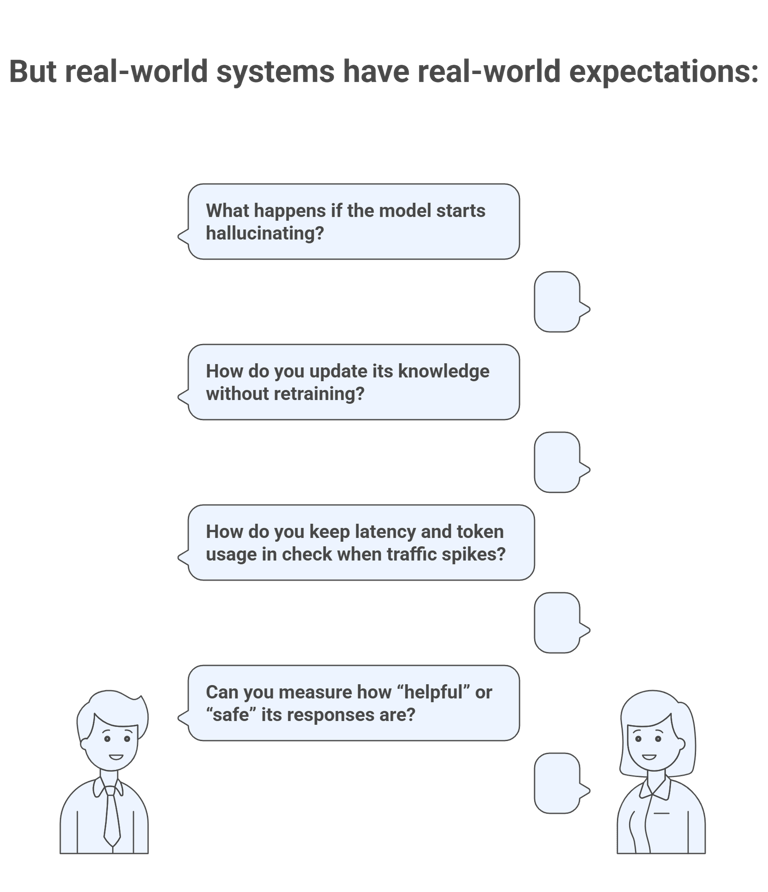

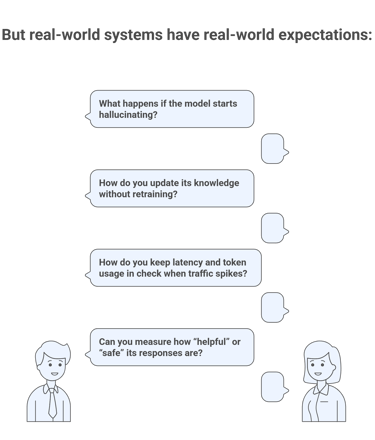

From Prompt to Product: The Operational Gap

Building a demo chatbot or AI assistant today doesn’t need deep ML expertise; APIs from OpenAI, Anthropic, or Hugging Face do most of the heavy lifting. You can create a “working” agent with a few lines of code.

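The harder part comes afterwards: keeping costs explainable, response quality stable, outputs safe, and latency low once real users arrive. These are exactly the kinds of challenges that LLMOps addresses!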

What is LLMOps?

LLMOps refers to the operational layer required to run LLMs reliably and at scale. It covers everything that happens after you've figured out the basic prompt or interaction.

Some core components of LLMOps include:

Prompt Versioning & Experimentation: Keeping track of different prompt strategies, prompt tuning, or system messages over time.

Monitoring & Evaluation: Not just latency or usage, but qualitative aspects like hallucination rate, offensive language, answer helpfulness, etc.

Guardrails & Safety: Implementing filters, moderation layers, or validation checkpoints for generated responses.

Retrieval-Augmented Generation (RAG) Management: Ensuring the context added to prompts is accurate, relevant, and fresh.

Cost Management: Monitoring token usage, optimizing API calls, and managing inference costs at scale.

Latency Control: Making sure the experience is fast even with large models or dynamic contexts.
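To make a couple of these components concrete, here is a minimal sketch of prompt versioning combined with per-call cost and latency logging. Everything in it is an assumption for illustration: `PromptRegistry`, `UsageLog`, `fake_model`, and the flat price per 1,000 tokens are hypothetical names and numbers, the model call is a stub rather than a real API, and counting tokens by splitting on whitespace is only a crude stand-in for a real tokenizer.

```python
import hashlib
import time
from collections import defaultdict

# All names below are hypothetical; this is a sketch, not a library API.


class PromptRegistry:
    """Keeps every version of a named prompt, keyed by a content hash."""

    def __init__(self):
        self._prompts = defaultdict(dict)  # name -> {version: template}

    def register(self, name, template):
        # Hashing the text means the same template always gets the same version id.
        version = hashlib.sha256(template.encode("utf-8")).hexdigest()[:8]
        self._prompts[name][version] = template
        return version

    def get(self, name, version):
        return self._prompts[name][version]


class UsageLog:
    """Records tokens, latency, and estimated cost for each call."""

    def __init__(self, price_per_1k_tokens):
        self.price_per_1k_tokens = price_per_1k_tokens  # assumed flat rate
        self.records = []

    def record(self, version, tokens, latency_s):
        cost = tokens / 1000 * self.price_per_1k_tokens
        self.records.append(
            {"version": version, "tokens": tokens, "latency_s": latency_s, "cost": cost}
        )
        return cost

    def cost_by_version(self):
        # Group spend by prompt version so a cost jump can be traced to a change.
        totals = defaultdict(float)
        for rec in self.records:
            totals[rec["version"]] += rec["cost"]
        return dict(totals)


def fake_model(prompt):
    """Stand-in for a real LLM API call; returns a canned answer."""
    return "This is a placeholder answer."


def count_tokens(text):
    # Crude whitespace split; real tokenizers count differently.
    return len(text.split())


def run(registry, log, name, version, **variables):
    prompt = registry.get(name, version).format(**variables)
    start = time.perf_counter()
    answer = fake_model(prompt)
    latency_s = time.perf_counter() - start
    log.record(version, count_tokens(prompt) + count_tokens(answer), latency_s)
    return answer


registry = PromptRegistry()
log = UsageLog(price_per_1k_tokens=0.002)  # made-up price
v1 = registry.register("support", "Answer briefly: {question}")
v2 = registry.register("support", "You are a helpful support agent. Answer: {question}")
run(registry, log, "support", v1, question="How do I reset my password?")
run(registry, log, "support", v2, question="How do I reset my password?")
print(log.cost_by_version())
```

Because each log record carries the prompt version, a rise in cost or latency can be attributed to a specific prompt change instead of guessed at.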

In short, LLMOps is not just MLOps for GenAI; it’s a new operational paradigm.

Why Does It Matter?

It’s no longer just about training and deploying a model. Now it’s about maintaining the orchestration around the model’s reasoning, with all the observability, latency control, and governance that come with it.

Without LLMOps:

You may end up with high costs and no way to explain why.

Quality of responses could degrade over time as data shifts.

There’s no framework to evaluate performance systematically.

You risk launching something that’s unreliable, biased, or even unsafe.

With LLMOps:

You get better observability and control over your system.

You can iterate faster and test new features or personas.

You build trust with users by ensuring safe and high-quality outputs.

You can scale responsibly whether you’re running 100 queries a day or 1 million.
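As a rough illustration of what systematic evaluation and a basic safety check could look like, here is a small sketch. It is not how any particular tool works: the test cases, the `fake_model` stub, the keyword-based grading, and the single regex guardrail are all hypothetical simplifications. Real setups typically use richer graders and moderation layers.

```python
import re

# Hypothetical evaluation set: each case lists phrases a good answer must contain.
EVAL_CASES = [
    {"question": "What is the refund window?", "must_include": ["30 days"]},
    {"question": "Which plan includes SSO?", "must_include": ["Enterprise"]},
]

# Hypothetical guardrail: block anything that looks like a US SSN.
BLOCKED_PATTERNS = [re.compile(r"\b\d{3}-\d{2}-\d{4}\b")]


def fake_model(question):
    """Stand-in for a real LLM call; the second answer is deliberately wrong."""
    canned = {
        "What is the refund window?": "Refunds are accepted within 30 days of purchase.",
        "Which plan includes SSO?": "SSO is available on the Pro plan.",
    }
    return canned.get(question, "I don't know.")


def passes_guardrail(text):
    # An answer is considered safe if no blocked pattern appears in it.
    return not any(pattern.search(text) for pattern in BLOCKED_PATTERNS)


def evaluate(model, cases):
    results = []
    for case in cases:
        answer = model(case["question"])
        # Keyword grading: every required phrase must appear (case-insensitive).
        correct = all(p.lower() in answer.lower() for p in case["must_include"])
        results.append(
            {"question": case["question"], "correct": correct, "safe": passes_guardrail(answer)}
        )
    total = len(results)
    return {
        "pass_rate": sum(r["correct"] for r in results) / total,
        "safe_rate": sum(r["safe"] for r in results) / total,
        "results": results,
    }


report = evaluate(fake_model, EVAL_CASES)
print(report["pass_rate"], report["safe_rate"])
```

Running the same fixed set of cases after every prompt or model change turns "quality seems worse" into a number that can be tracked over time.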

Tools Making This Easier:

As I continued exploring, I found that there are already some great tools and frameworks being used in the LLMOps space:

LangChain / LlamaIndex - For chaining prompts and building RAG pipelines

TruLens, Guardrails.ai - For evaluating output quality, reliability, and safety

OpenAI Evals / PromptLayer - For prompt testing and version control

Weights & Biases / MLflow - Some MLOps tools now extend into GenAI tracking

BentoML, Ray Serve - For production-grade LLM deployment with APIs

And what about Databricks?

As someone who has worked extensively with Databricks for data science and machine learning workflows, I was curious to see how their ecosystem supports LLM-powered applications. After exploring their evolving GenAI toolset, it became clear that they’re not just offering surface-level compatibility. They’re embedding LLMOps into the core of the Lakehouse platform in a way that’s both practical and scalable.

Here are a few components that particularly stood out to me:

Databricks Model Serving: Easily deploy open-source models like Mistral or fine-tuned LLaMA variants with autoscaling and low-latency SLAs - perfect for production use cases without needing external hosting.

Unity Catalog for Prompt & Model versioning: This offers centralized governance where prompts, vector embeddings, source data, and model lineage are all auditable and secure - a big plus for enterprise workflows.

MLflow for LLM Tracking: It now supports LLM-specific metadata such as prompt templates, responses, retriever parameters, and evaluation metrics - seamlessly integrated into familiar ML experiment workflows.

LakehouseIQ (Preview): An exciting addition that lets you build RAG systems with semantic search on enterprise datasets, enabling natural language querying over your structured/unstructured sources.

Vector Search with Delta Lake: Native embedding storage and similarity search integrated with metadata filtering; this makes managing your RAG pipelines far less brittle than bolt-on vector DBs.
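To show what "similarity search integrated with metadata filtering" means in practice, here is a toy sketch in plain Python. It does not use the Databricks Vector Search API; the `DOCS` list, the three-dimensional hand-written vectors, and the `search` function are all hypothetical, and a real system would use model-generated embeddings and an approximate nearest-neighbor index.

```python
import math

# Hypothetical toy index: tiny hand-written "embeddings" plus metadata.
DOCS = [
    {"id": "a", "vector": [1.0, 0.0, 0.0], "meta": {"team": "sales"}},
    {"id": "b", "vector": [0.9, 0.1, 0.0], "meta": {"team": "legal"}},
    {"id": "c", "vector": [0.0, 1.0, 0.0], "meta": {"team": "sales"}},
]


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def search(query_vector, docs, top_k=2, filters=None):
    filters = filters or {}
    # Apply the metadata filter first, then rank what remains by similarity.
    candidates = [
        d for d in docs
        if all(d["meta"].get(key) == value for key, value in filters.items())
    ]
    ranked = sorted(
        candidates, key=lambda d: cosine(query_vector, d["vector"]), reverse=True
    )
    return [d["id"] for d in ranked[:top_k]]


query = [1.0, 0.0, 0.0]
print(search(query, DOCS))                             # closest documents overall
print(search(query, DOCS, filters={"team": "sales"}))  # restricted to one team
```

The filter changes which documents are eligible before ranking, which is why keeping embeddings and metadata in the same store can simplify a RAG pipeline.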

What impressed me most is how all these components sit within one unified ecosystem. Instead of juggling multiple tools for orchestration, storage, and observability, you can manage the full lifecycle of your GenAI stack in Databricks with native support and governance. That really changes the game for building enterprise-grade LLM solutions.

Final Thoughts:

Reading about LLMOps made me realize how critical this layer is for anyone serious about GenAI. It’s one thing to create a chatbot that works on your local machine, and a completely different challenge to make it work reliably for thousands of users across different scenarios and languages.

As the GenAI space matures, I believe LLMOps will become as essential and mainstream as MLOps is today. Do share your thoughts.